FAIR Maturity Evaluation of Nordic and Baltic data repositories

Science is one of the greatest collective endeavors and the most important channel for gaining knowledge. Scientific research is the collection of data to investigate and explain a phenomenon or theory using the scientific method. The premise of science is that a phenomenon can only be understood reliably and accurately by collecting empirical data. Research is also critical to societal development: it generates new knowledge, improves education, raises our quality of life, and supports decision-making, to name a few examples.

A crucial premise for research, therefore, is to ensure transparency of the data, enable the reproducibility of research results, and build trust in the results and the scientific method. However, a major caveat is that research data is largely inaccessible to others. Speaking at the World Economic Forum in Davos, EC President Ursula von der Leyen mentioned that 85% of research data is never used. Some studies indicate that only about 20% of the data generated by peer-reviewed research is deposited in suitable repositories (10.1371/journal.pone.0194768). Another warning sign is that “Data preparation accounts for about 80% of the work of data scientists” (Forbes, 2016). Similar findings by R&D divisions in the private sector support this claim. If correct, this implies that research is tremendously ineffective. To address this, along with the problem of building transparency, reproducibility, and trust in science, it is necessary to share data in a way that makes the data findable, accessible, interoperable, and reusable. In a word: FAIR.

The EOSC-Nordic project has pledged to implement FAIR in the Nordic and Baltic regions. This will be achieved by disseminating the benefits of going FAIR to a broad scientific community, providing evaluation-based recommendations on how to FAIRify specific data repositories, and supporting communities in becoming more FAIR by hosting dedicated community events that address specific FAIRification challenges.

In the FAIR Maturity evaluation, data repositories were scored according to their compliance with certain machine-actionable generic metadata, as measured by the FAIR Maturity Evaluation Service (Wilkinson et al. 2019). Machine-actionable means that a ‘bot’ or a harvester (“the machine”) can understand what kind of data repository it is interacting with, including what kind of data is hosted (e.g. research domain, formats), what the usage license is, provenance details, and external links to other FAIR digital objects, to name a few. In short, the use of machine-actionable metadata and data is ultimately intended to achieve the goal “the machine knows what I mean”.
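To make "machine-actionable" more concrete, the following is a minimal sketch of what a harvester could do with a dataset landing page. It is not how the FAIR Maturity Evaluation Service works internally. It assumes, purely for illustration, that the metadata is embedded as schema.org JSON-LD in the page, and the `CHECKS` mapping, the function name, and the sample page (including its placeholder DOI) are all hypothetical.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of <script type="application/ld+json"> blocks."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.documents = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.documents.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # metadata that cannot be parsed is skipped


# Hypothetical checklist: schema.org property names chosen for illustration only.
CHECKS = {
    "identifier": "identifier",
    "license": "license",
    "provenance": "creator",
    "links": "isPartOf",
}


def machine_readable_fields(html):
    """Report which checklist items a machine can find in the page's JSON-LD."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    found = {name: False for name in CHECKS}
    for doc in parser.documents:
        if not isinstance(doc, dict):
            continue
        for name, prop in CHECKS.items():
            if doc.get(prop):
                found[name] = True
    return found


# Made-up landing page; the DOI below is a placeholder, not a real dataset.
SAMPLE_PAGE = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Dataset",
 "identifier": "https://doi.org/10.1234/example",
 "license": "https://creativecommons.org/licenses/by/4.0/"}
</script>
</head><body>Example dataset landing page</body></html>
"""

print(machine_readable_fields(SAMPLE_PAGE))
```

For the sample page, the identifier and the license are found, while provenance and external links are reported as missing. A page that only describes these details in free text would fail every check, even though a human reader could find them.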

The goal of the evaluations is to demonstrate the benefits of machine-actionable metadata and raise awareness of FAIR for data repositories. One of the primary goals of our effort is to monitor the evolution of FAIR metrics for individual data repositories to trace the (positive) development of FAIRer research data and, if possible, to gauge its impact on data reuse and scientific quality.

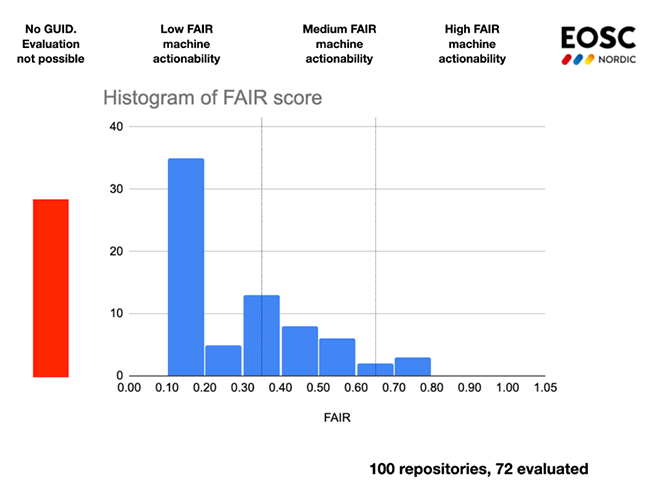

From the sample of repositories, we excluded those that only host publications (typically pre-prints or reprints) and those that have no obvious potential use in science. This yielded 100 repositories associated with one or more of the Nordic and Baltic countries. Among these repositories, 28 were discarded because they do not provide a Globally Unique Identifier (GUID) for individual datasets. The remaining 72 repositories were evaluated using approximately 720 datasets, and the resulting FAIR scores are shown in Figure 1. The total execution time for the evaluations was a little over 4 days.
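The figures in this paragraph can be cross-checked with a few lines of arithmetic. The sketch below uses only the numbers stated in the text. The per-dataset time is a rough lower bound that assumes the evaluations ran one after another, which the text does not state.

```python
total_repositories = 100
without_guid = 28

# Repositories that could be evaluated.
evaluated = total_repositories - without_guid

# "Approximately 720 datasets" spread over the evaluated repositories.
datasets = 720
datasets_per_repository = datasets / evaluated

# "A little over 4 days" of execution time, so 4 days is a lower bound.
# Assumption: datasets were evaluated sequentially.
run_time_days = 4
minutes_per_dataset = run_time_days * 24 * 60 / datasets

print(evaluated, datasets_per_repository, minutes_per_dataset)
```

This gives 72 evaluated repositories, about 10 datasets per repository, and at least roughly 8 minutes per dataset under the sequential assumption.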

Figure 1: FAIR Maturity results for 100 Nordic and Baltic repositories, of which 28 could not be evaluated because their datasets lack GUIDs. A majority of the 72 evaluated repositories score in the low category, meaning they do not support machine-actionable metadata.

Read more on the background for the FAIR Maturity evaluation and the recommended actions.